Petabyte-Scale Data Pipelines with Docker, Luigi and Elastic Spot Instances

This is the first article in a series that describes how we built a new data-intensive product, AdRoll Prospecting, using an architecture based on Docker containers.

We organized a meetup about this topic with Pachyderm.io. A recording of our meetup talk and the slides are available online.

We will elaborate on different aspects of the architecture in upcoming blog posts. This one focuses on Docker.

A Modern Data-Driven Product

On June 17th, we launched a new product, AdRoll Prospecting, to public beta. A remarkable thing about the launch is that the product was built from scratch by a small engineering team of six people, in about six months, and it was released on time.

The product does something that is practically the Holy Grail of marketing. The core of AdRoll Prospecting is a massive-scale machine learning model that predicts who, amongst the billions of cookies AdRoll knows something about, is most likely to be interested in your product, and can thus find new customers for your business.

A modern, data-driven product like Prospecting is not only about machine learning. We also provide an easy-to-use dashboard (built using React.js) that allows you to view the performance of your prospecting campaigns in detail. Behind the scenes, we are connected to AdRoll’s Real-Time Bidding engine, and we have dozens of checks and dashboards monitoring the health of the product internally, so we can be proactive about any issues affecting customer accounts.

Thanks to our experience with AdRoll’s existing retargeting product, we had an idea of what building a complex system like this would entail. When we started building AdRoll Prospecting, we were able to look back at the lessons learned and think about how to build a flexible and sustainable backend architecture for a massively data-driven product as quickly as possible, without sacrificing robustness or cost of operation.

Managing Complexity

We have been very happy with the result, which is the motivation for this series of blog articles. Not only has it allowed us to build and release the product on time, but we are planning to migrate many existing workloads to the new system as well.

Probably the most important feature of the new architecture is its lack of features, that is, its simplicity. Knowing that the problem we are solving is already complex, we did not want to complicate it further with a framework that would force us to model the problem in terms of the framework's own abstractions.

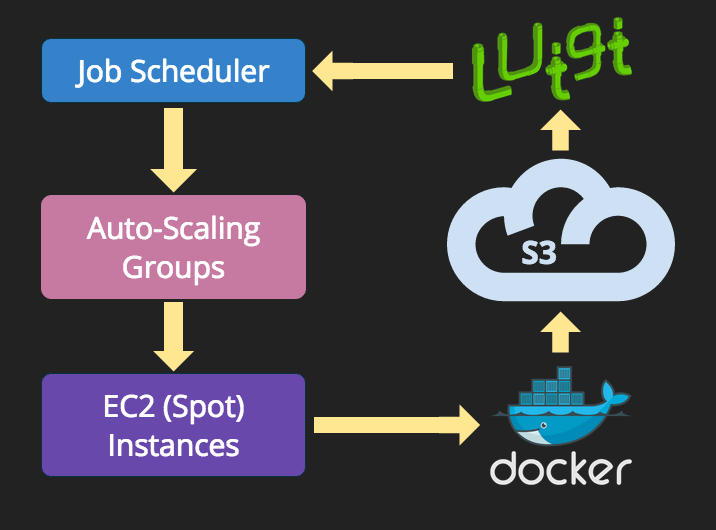

Our architecture is based on a stack of three complementary layers, which heavily rely on well-known, battle-hardened components:

- At the lowest level, we use AWS Spot Instances and Auto-Scaling Groups to provide computing resources on demand. Data is stored in AWS Simple Storage Service (S3). We have built a simple in-house job queue, Quentin, so we can leverage custom CloudWatch metrics to trigger scaling based on the actual length of the job queue.

- We orchestrate a complex graph of interdependent batch jobs using Luigi, a Python-based, open-source tool for workflow management.

- At the highest level, each individual task (batch job) is packaged as a Docker container.
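To make the lowest layer more concrete, here is a minimal sketch of how a queue length could be published as a custom CloudWatch metric. Quentin is an in-house tool whose interface is not described in this article, so the namespace `Quentin`, the metric name `QueueLength`, and the `QueueName` dimension are hypothetical. The payload follows the shape expected by boto3's `put_metric_data`; boto3 is imported lazily so the payload builder can be exercised without AWS credentials.

```python
from typing import Any, Dict, List


def queue_length_metric(queue_name: str, pending_jobs: int) -> List[Dict[str, Any]]:
    """Build the MetricData payload for one queue-length sample."""
    if pending_jobs < 0:
        raise ValueError("pending_jobs must be non-negative")
    return [{
        "MetricName": "QueueLength",  # hypothetical metric name
        "Dimensions": [{"Name": "QueueName", "Value": queue_name}],
        "Value": float(pending_jobs),
        "Unit": "Count",
    }]


def publish_queue_length(queue_name: str, pending_jobs: int,
                         namespace: str = "Quentin") -> None:
    """Send the sample to CloudWatch (needs boto3 and AWS credentials)."""
    import boto3  # imported here so the payload builder has no dependencies

    client = boto3.client("cloudwatch")
    client.put_metric_data(
        Namespace=namespace,  # hypothetical namespace
        MetricData=queue_length_metric(queue_name, pending_jobs),
    )
```

A CloudWatch alarm on such a metric can then drive the scaling policy of an Auto-Scaling Group, so the fleet grows when jobs pile up and shrinks when the queue drains.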

This stack allows anyone to build new tasks very quickly using Docker, define their dependencies in terms of inputs and outputs using Luigi, and get them executed on any number of EC2 instances without having to worry about provisioning thanks to our scheduler and auto-scaling groups, as illustrated below.


This seemingly simple architecture makes a vast amount of complexity manageable. The Docker containers encapsulate jobs written in seven different programming languages. Luigi is used to orchestrate a tightly connected graph of about 50 of these jobs, and Quentin and Auto-Scaling Groups allow us to execute the jobs on an elastic fleet of hundreds of the largest EC2 spot instances in a very cost-effective manner.
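The way a dependency graph falls out of inputs and outputs can be sketched in plain Python. This is a stand-in for the idea, not Luigi's API: the `Job` class, the job names, and the S3 paths are all hypothetical. Each job depends on whichever job produces one of its inputs, and the standard library's `graphlib` yields a valid execution order.

```python
from dataclasses import dataclass
from graphlib import TopologicalSorter  # Python 3.9+
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Job:
    name: str
    inputs: Tuple[str, ...]  # S3 prefixes the job reads
    output: str              # S3 prefix the job writes


def execution_order(jobs: List[Job]) -> List[str]:
    """Order jobs so that each one runs after the producers of its inputs."""
    producer: Dict[str, str] = {job.output: job.name for job in jobs}
    # Inputs that no job produces (e.g. raw logs) are treated as external data.
    graph = {
        job.name: {producer[i] for i in job.inputs if i in producer}
        for job in jobs
    }
    return list(TopologicalSorter(graph).static_order())


jobs = [
    Job("train_model", ("s3://bucket/features/",), "s3://bucket/model/"),
    Job("extract_features", ("s3://bucket/raw_logs/",), "s3://bucket/features/"),
    Job("score_cookies",
        ("s3://bucket/model/", "s3://bucket/features/"),
        "s3://bucket/scores/"),
]
print(execution_order(jobs))
```

Nobody declares the order explicitly here. It is derived entirely from which job writes what, which is what keeps a graph of dozens of jobs manageable.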

The main benefit of embracing this heterogeneous, bazaar-like approach is that we can safely use the most suitable language, instance type, and distribution pattern for each task.

Old New Paradigm

Containerized batch jobs have been used for decades. Mainframes pioneered batch jobs and virtualization in the 1960s. Outside mainframes, Google was already isolating batch workloads in the early 2000s with operating-system-level virtualization in their in-house system, Borg. A few years later, this approach became more widely available through open-source tools such as OpenVZ and LXC, and later through managed services such as Joyent Manta, which is based on Solaris Zones.

Containers solve three tricky issues in batch processing, namely:

- Job packaging: a job may depend on a multitude of third-party libraries that have dependencies of their own. In particular, if the job is written in a scripting language, such as Python or R, encapsulating the whole environment in a self-contained package is non-trivial.

- Job deployment: packaged jobs need to be deployable on a host machine, and they need to be able to execute on the host without altering system-wide resources.

- Resource isolation: if multiple jobs execute on the same host simultaneously, they must share resources nicely and they must not interfere with each other.
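With Docker, these three concerns map onto a single `docker run` invocation. The sketch below builds such a command. The image name, the default limits, and the convention of passing input and output locations as arguments are assumptions made for illustration; `--rm`, `--memory`, and `--cpu-shares` are standard Docker CLI options.

```python
import shlex
from typing import List


def docker_run_command(image: str, args: List[str],
                       memory: str = "4g", cpu_shares: int = 512) -> List[str]:
    """Build an argv list for running one batch job in an isolated container."""
    return [
        "docker", "run",
        "--rm",                            # deployment: leave nothing behind on the host
        "--memory", memory,                # isolation: cap the job's memory
        "--cpu-shares", str(cpu_shares),   # isolation: relative CPU weight
        image,                             # packaging: the image carries all libraries
        *args,
    ]


cmd = docker_run_command(
    "example/feature-extractor:1.0",       # hypothetical image name
    ["s3://bucket/raw_logs/", "s3://bucket/features/"],
)
print(shlex.join(cmd))
```

The returned list can be passed directly to `subprocess.run(cmd, check=True)` on a host that has Docker installed.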

All these issues have been technically solvable using existing virtualization techniques for decades prior to Docker. What happened with Docker is that creating containers became so easy, and socially acceptable, that today it is realistic to expect that every analyst, data scientist, and junior software engineer is able to package their code in a container on their laptop.

A result of this is that we can allow, and even encourage, each user of the system to use whichever tools are most appropriate for the job and make them most productive, instead of requiring them to learn a new language or a computing paradigm such as MapReduce. Each person is naturally responsible for packaging their jobs using Docker, and for fixing them if anything fails in the container.

The result is not only faster time to market, thanks to a more efficient use of different skillsets and battle-hardened tools such as R, but also a feeling of empowerment across the organization; everyone can access data, test new models, and push code to production using the tools they know best.

Good Behavior Expected

Containerized batch jobs are not only about peace, love, and continuous deployment. We expect jobs to adhere to certain ground rules.

The basic pattern that most of our jobs follow is that they only ingest immutable data from S3 as their input, and produce immutable data in S3 as their output. If the job sticks with this simple pattern, it becomes idempotent and side-effect free.

In effect, each container becomes a function in the sense of functional programming. We have found that it is very natural to write containerized batch jobs with this mindset, from the simplest shell scripts to the most complex data manipulation jobs.
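One way to make the "container as a function" idea concrete is to derive the output location purely from the job's name and its inputs, so that re-running the same job on the same data always targets the same place. The naming scheme and the bucket name below are a hypothetical convention, not something prescribed by this architecture.

```python
import hashlib
from typing import Sequence


def output_prefix(job_name: str, input_urls: Sequence[str],
                  bucket: str = "example-bucket") -> str:
    """Derive a deterministic S3 prefix from the job name and its inputs."""
    digest = hashlib.sha256()
    for url in sorted(input_urls):  # the order of inputs must not matter
        digest.update(url.encode("utf-8"))
        digest.update(b"\0")        # separator so ["ab", "c"] differs from ["a", "bc"]
    return f"s3://{bucket}/{job_name}/{digest.hexdigest()[:16]}/"


a = output_prefix("train_model", ["s3://bucket/features/2015-06-01/"])
b = output_prefix("train_model", ["s3://bucket/features/2015-06-01/"])
print(a == b)
```

Because the same inputs always map to the same prefix, a retried job overwrites its own earlier attempt rather than creating a second, conflicting result.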

Another related requirement is that jobs should be atomic. We expect that jobs produce a _SUCCESS file, similar to Hadoop, upon successful completion. Operations on single files are atomic in S3, so this requirement is easy to fulfill. Our task dependencies are set up with Luigi so that the output data is considered valid only if the success file exists, so partial results are not a concern.
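The success-marker convention can be sketched as follows. A local directory stands in for an S3 prefix so that the example runs anywhere, and the function names are hypothetical. With S3, the final step would be a single object upload.

```python
import tempfile
from pathlib import Path
from typing import Dict

SUCCESS_MARKER = "_SUCCESS"


def write_output(prefix: Path, parts: Dict[str, bytes]) -> None:
    """Write all data files first, then the marker as the very last step."""
    prefix.mkdir(parents=True, exist_ok=True)
    for name, payload in parts.items():
        (prefix / name).write_bytes(payload)
    (prefix / SUCCESS_MARKER).touch()  # only now is the output considered valid


def is_complete(prefix: Path) -> bool:
    """Downstream jobs treat the output as valid only if the marker exists."""
    return (prefix / SUCCESS_MARKER).exists()


with tempfile.TemporaryDirectory() as tmp:
    out = Path(tmp) / "features"
    out.mkdir()
    (out / "part-00000").write_bytes(b"partial")  # simulate a job that crashed
    print(is_complete(out))                       # False: no marker yet
    write_output(out, {"part-00000": b"a", "part-00001": b"b"})
    print(is_complete(out))                       # True
```

A job that dies halfway leaves data files but no marker, so anything downstream simply sees the output as missing and the job can be re-run.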

We have found this straightforward reliance on files in S3 to be easy to explain, troubleshoot, and reason about. S3 is a nearly perfect data fabric: it is extremely scalable, has an amazing record of uptime, and it is cheap to use. The empowering effect of Docker would be much diminished if data were less easily accessible.

Next Up: Luigi

Containerized batch jobs benefit from a clear separation of concerns. Not only is it easier to write jobs this way, but it also helps to ensure that each job takes only minutes, or at most a few hours, to execute, which is crucial when dealing with ephemeral spot instances.

An inevitable result of this is that the system becomes a complex hairball of interdependent jobs. Luigi has proven to be a straightforward way to manage this dependency graph, which is the topic of a future blog post.